ContextRAG

Give LLMs the Context They Need

A Context Extension Platform for AI Systems

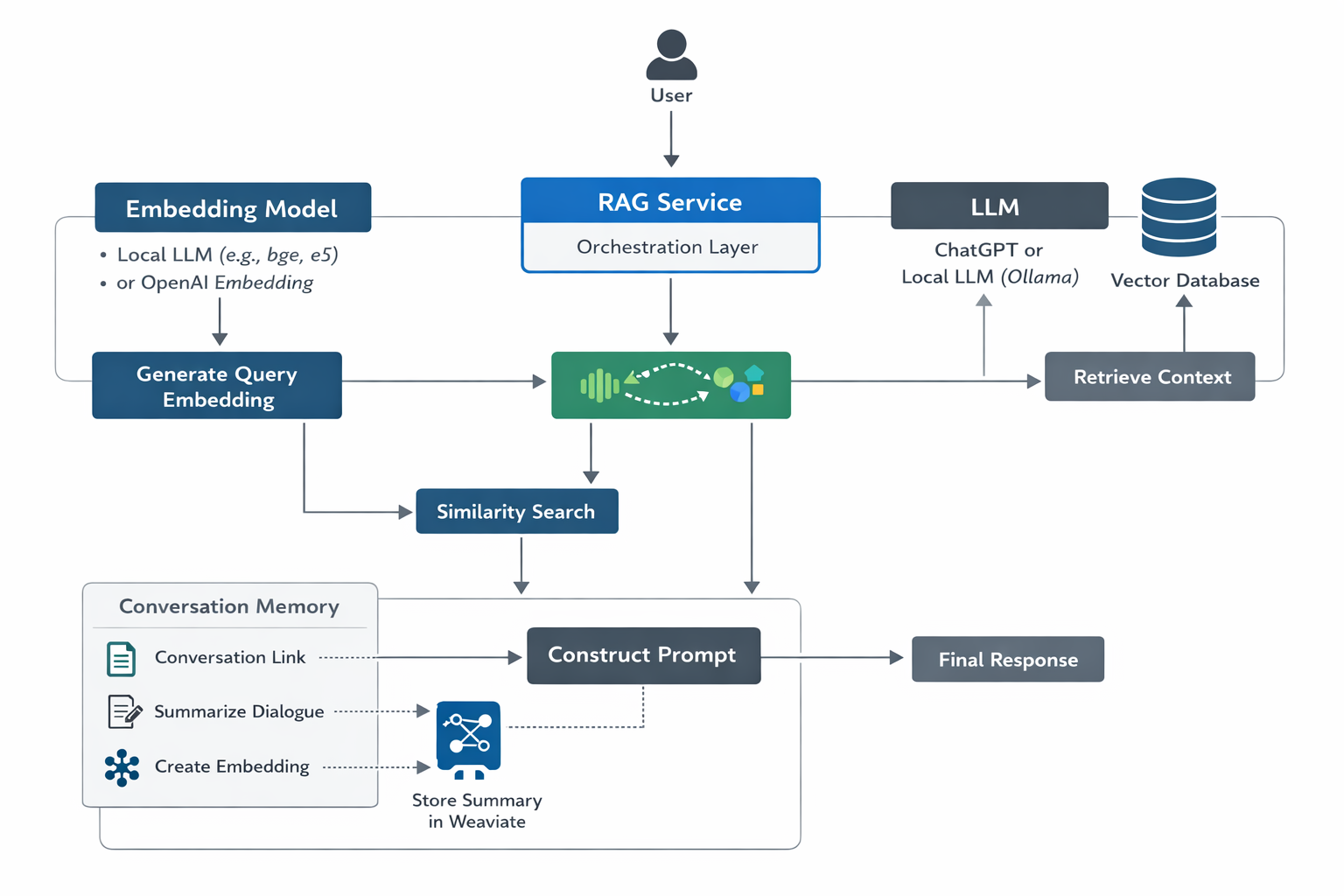

ContextRAG sits between developers and Large Language Models. It retrieves relevant project knowledge and augments prompts so AI systems can generate responses grounded in real project context.

Large Language Models like ChatGPT, Claude, and Gemini lack awareness of the developer’s project environment.

ContextRAG retrieves project knowledge from a vector database and combines it with the user's query before the prompt is sent to the LLM.
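The retrieve-then-augment flow can be sketched in a few lines of Python. This is a minimal illustration, not ContextRAG's implementation: the word-count `embed` function stands in for a real embedding model, the in-memory `DOCS` list stands in for the vector database, and the document texts and prompt wording are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, contexts: list[str]) -> str:
    """Combine retrieved context with the user's query into one prompt."""
    context_block = "\n".join(f"- {c}" for c in contexts)
    return (
        "Use the project context below to answer.\n\n"
        f"Context:\n{context_block}\n\n"
        f"Question: {query}"
    )


# Hypothetical project knowledge (stand-in for the vector database contents).
DOCS = [
    "The Kafka ingestion pipeline reads documentation and repository events from topics.",
    "The frontend uses a component library for the dashboard.",
    "Developer discussion: ingestion workers write embeddings to the vector database.",
]

query = "How does the Kafka ingestion pipeline work in this project?"
prompt = build_prompt(query, retrieve(query, DOCS, k=2))
print(prompt)
```

The resulting `prompt` string is what would be sent to the chosen LLM. In a real deployment the similarity search would run inside the vector database rather than over a Python list.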

Use OpenAI, Claude, Gemini or local models via Ollama.

Project documentation and code become searchable context.

Developer discussions become structured knowledge.

Kafka pipelines ingest documentation, repositories and logs.
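As a rough sketch of the ingestion side, the example below splits source text into chunks and passes them through a queue as JSON records. Everything here is an assumption for illustration: a standard-library `Queue` stands in for a Kafka topic, and the `IngestRecord` fields, file paths, and chunk size are hypothetical rather than ContextRAG's actual schema.

```python
import json
from dataclasses import asdict, dataclass
from queue import Queue


@dataclass
class IngestRecord:
    source: str       # hypothetical source type: "docs", "repo", or "logs"
    path: str         # where the text came from
    chunk_index: int  # position of this chunk within the source text
    text: str


def chunk_text(text: str, max_words: int = 50) -> list[str]:
    """Split text into chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def produce(topic: Queue, source: str, path: str, text: str, max_words: int = 50) -> int:
    """Chunk the text and publish one JSON record per chunk; return the chunk count."""
    chunks = chunk_text(text, max_words)
    for i, chunk in enumerate(chunks):
        record = IngestRecord(source, path, i, chunk)
        topic.put(json.dumps(asdict(record)))
    return len(chunks)


def consume(topic: Queue) -> list[IngestRecord]:
    """Drain the queue and decode each message back into a record."""
    records = []
    while not topic.empty():
        records.append(IngestRecord(**json.loads(topic.get())))
    return records


# In-memory stand-in for a Kafka topic.
topic: Queue = Queue()
produce(topic, "docs", "docs/architecture.md", "word " * 120, max_words=50)
produce(topic, "logs", "logs/app.log", "ingestion worker started")
records = consume(topic)
print(len(records), [r.source for r in records])
```

A consumer in a real pipeline would embed each record's `text` and write it to the vector database instead of collecting records in a list.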

Developer asks:

"How does the Kafka ingestion pipeline work in this project?"

ContextRAG retrieves architecture documentation and past developer conversations, augments the prompt, and allows the LLM to generate an answer grounded in real project knowledge.

This project is being built step by step in public. Follow the journey as the platform evolves from a prototype into a full developer intelligence system.

ContextRAG is currently being built. Follow development and explore related projects.

ContextRAG aims to become the contextual intelligence layer for AI systems, allowing AI to understand software architectures, codebases, developer discussions and production systems.